xAI Colossus Supercomputer 2026: 300,000+ GPUs, Grok 5 Training & Elon Musk Latest Update

xAI Colossus 2026: one of the world's largest AI clusters, targeting 300k+ GPUs. Grok 5 training progress, Memphis data center specs, and Elon Musk's plans.

2/1/2026

February 2026: xAI’s Colossus is no longer just a concept. xAI bills it as the world’s largest AI training cluster, and it is still growing fast. With over 100,000 Nvidia H100 GPUs online and plans to reach 300,000+ in 2026, Colossus is powering Grok 5 development and positioning xAI to challenge OpenAI, Google, and Meta.

Here’s the latest on specs, timeline, training impact, and what Elon Musk has said recently.

Current Scale & Specs (Feb 2026)

GPUs: 100,000+ Nvidia H100 (Phase 1 live since late 2025)

Expansion target: 200,000–300,000+ (H200 + B200 mix) by mid-to-late 2026

Location: Memphis, Tennessee (massive data center built in record time)

Power usage: ~150–250 MW (equivalent to a small city)

Networking: High-speed Ethernet fabric (Nvidia Spectrum-X, per Nvidia's announcement) for low-latency training

Cooling: Advanced liquid cooling to handle extreme density
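
As a rough sanity check on the power figure above, here is a minimal back-of-envelope sketch. It assumes Nvidia's published 700 W maximum TDP for the H100 SXM part. The per-GPU server overhead multiplier and the PUE (power usage effectiveness) values are illustrative assumptions, not numbers from xAI.

```python
# Back-of-envelope facility power estimate for a GPU cluster.
# Only the 700 W TDP is a published spec; the overhead and PUE
# values below are assumptions chosen for illustration.

H100_SXM_TDP_W = 700  # Nvidia's published max TDP for the H100 SXM


def facility_power_mw(gpu_count, gpu_tdp_w=H100_SXM_TDP_W,
                      server_overhead=1.5, pue=1.3):
    """Estimate total facility power in megawatts.

    server_overhead: assumed multiplier covering CPUs, memory,
        NICs, and fans per GPU (hypothetical value).
    pue: assumed power usage effectiveness, i.e. total facility
        power divided by IT power (hypothetical value).
    """
    it_power_w = gpu_count * gpu_tdp_w * server_overhead
    return it_power_w * pue / 1e6


# Optimistic and pessimistic assumptions for a 100,000-GPU cluster.
low = facility_power_mw(100_000, server_overhead=1.5, pue=1.3)
high = facility_power_mw(100_000, server_overhead=2.0, pue=1.5)
print(f"Estimated range: {low:.1f} to {high:.1f} MW")
```

Under these assumptions the estimate comes out at roughly 137 to 210 MW, in the same ballpark as the ~150–250 MW quoted above. Scaling the same function to 300,000 GPUs triples the result, which shows why power supply is a key constraint on the expansion.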

Musk has repeatedly called Colossus “the most powerful training cluster by every metric,” and early benchmarks reportedly support that claim.

Why Colossus Matters for Grok

Grok 5 training: Colossus is the main supercomputer behind Grok 5 (rumored 6–8 trillion parameters, Truth Mode 2.0, native video/audio).

Speed advantage: Massive GPU count + optimized stack = faster iterations than competitors.

Real-time X data: Cluster pulls live data from X (Twitter) → Grok stays current while others lag.

AGI path: Musk says Colossus is key to xAI’s goal of “understanding the universe” — Grok 5 is the next big step.

Timeline & Future Plans

Late 2025: Phase 1 (100k H100 GPUs) online

Q1–Q2 2026: Scale to 200k+ GPUs (H200/B200 rollout)

Mid-to-late 2026: Target 300k–500k GPUs → Colossus 2 phase

Long-term: Musk wants xAI to lead in raw compute power for years

Elon Musk’s Latest Comments

“Colossus is already the most powerful training cluster in the world, and we’re just getting started.” (recent X post)

Teased “Colossus 2” with even higher density and next-gen chips

Goal: Make xAI compute-independent and outpace OpenAI/Google

Verdict – February 2026

Colossus isn’t hype: it’s real, it’s massive, and it could help make Grok 5 one of the dominant models of 2026. If the 300k GPU target is hit on schedule, xAI could leapfrog competitors in raw model capability.

Winner right now: xAI Colossus (raw training speed for Grok 5 + real-time X integration gives it the edge in 2026).

What do you think — will Colossus make Grok 5 the most powerful AI this year? Drop your take below!

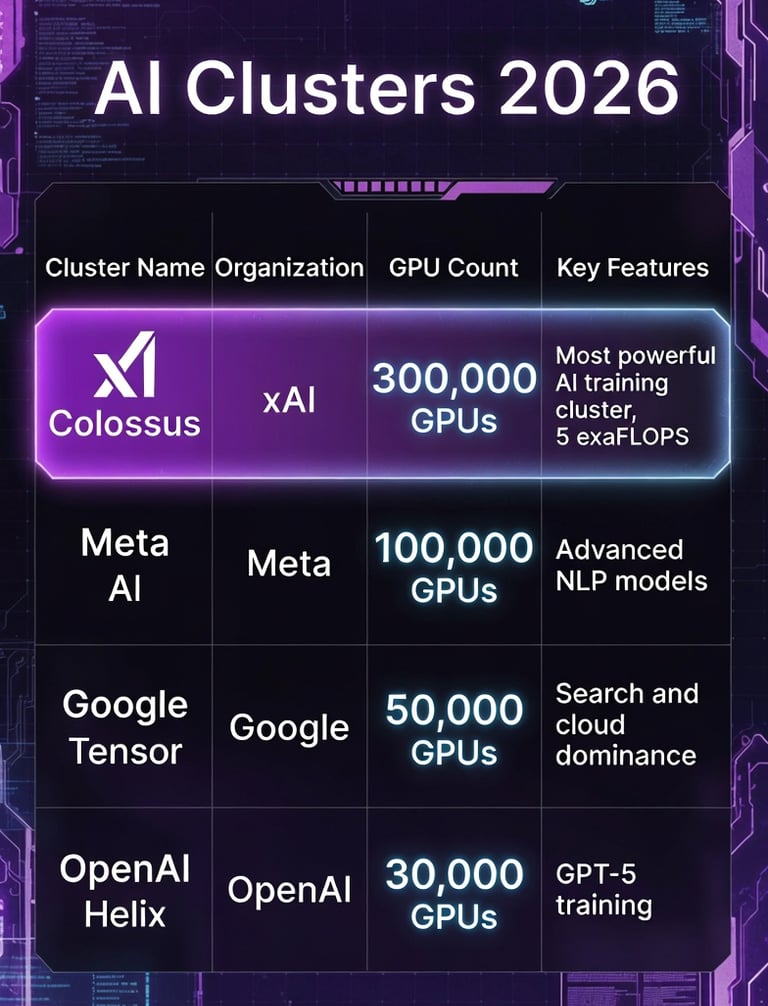

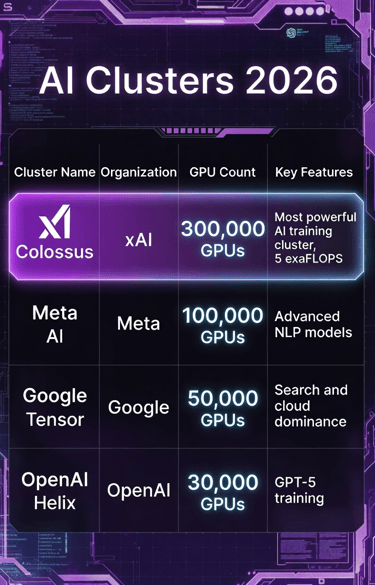

Quick Comparison Table – 2026 AI Clusters

Copyright © 2025 Grok Musk World
